When artificial intelligence is used to assist in critical decisions like medical diagnoses, it's often compared to human experts to see who performs better. But a new study reveals that these comparisons can be misleading, potentially overstating AI's advantages due to statistical quirks rather than true superiority. This matters because such flawed comparisons could lead to over-reliance on AI in areas where human judgment remains crucial.

Researchers discovered that when multiple human decision-makers use the same underlying model but apply different thresholds for making decisions, their aggregated performance appears worse than the AI's when plotted on standard performance charts. This follows from a mathematical result called Jensen's inequality: because the performance curve typically bows upward (it is concave), the average of several points on the curve falls below the curve itself. In other words, humans might be as capable as the AI, but their varied thresholds make them look less effective when their results are combined.
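
The argument above can be written out in a few lines. This is a sketch under stated assumptions rather than the paper's own notation: the symbols $R$, $x_i$, and $n$ are introduced here for illustration, the curve is assumed to be concave, and the doctors are assumed to be weighted equally in the average.

$$
\begin{aligned}
&\text{Doctor } i \text{ operates at false positive rate } x_i \text{ with true positive rate } R(x_i), \quad i = 1, \dots, n. \\
&\text{Aggregated point: } \left( \bar{x},\ \bar{y} \right) = \left( \frac{1}{n} \sum_{i=1}^{n} x_i,\ \frac{1}{n} \sum_{i=1}^{n} R(x_i) \right). \\
&\text{Jensen's inequality for concave } R: \quad \bar{y} = \frac{1}{n} \sum_{i=1}^{n} R(x_i) \ \le\ R\!\left( \frac{1}{n} \sum_{i=1}^{n} x_i \right) = R(\bar{x}).
\end{aligned}
$$

If $R$ is strictly concave and the $x_i$ are not all equal, the inequality is strict: every doctor sits on the curve, yet the averaged point sits below it.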

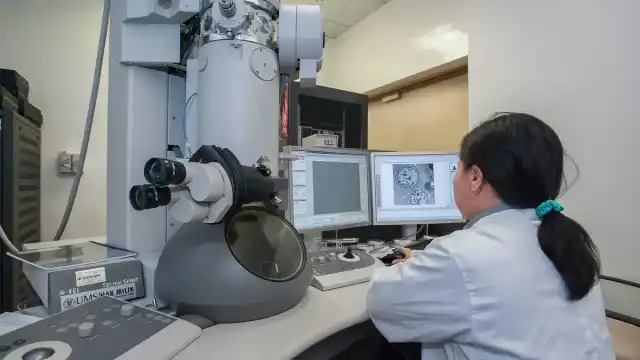

The researchers analyzed a large dataset of high-risk pregnancy diagnoses in China, involving over a million observations and 300 features such as medical tests and patient history. They compared AI-generated predictions against doctors' diagnoses using Receiver Operating Characteristic (ROC) curves, a common tool that plots the true positive rate against the false positive rate as the decision threshold varies. By examining both individual and aggregated human decisions, they tested how differences in incentives and information access affect such comparisons.

Results showed that when doctors' individual decision points were plotted, they often aligned with the AI's ROC curve, but their combined average fell below it. This indicates that heterogeneity among doctors, not inferior skill, drives the apparent performance gap. For instance, if each doctor uses a slightly different risk threshold, the point formed by averaging the group's true positive and false positive rates lies below the curve, making AI seem dominant even when it isn't.
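
The effect described above can be reproduced with a small simulation. The following is a minimal sketch, not the study's method or data: it assumes an equal-variance binormal ROC curve (a common textbook model), a hypothetical separation parameter `d_prime`, and four hypothetical doctors whose operating points all lie exactly on that shared curve and who are weighted equally in the average.

```python
from statistics import NormalDist, mean

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def roc_tpr(fpr: float, d_prime: float = 1.5) -> float:
    """True positive rate at a given false positive rate on an
    equal-variance binormal ROC curve (an assumed, illustrative model)."""
    return STD_NORMAL.cdf(d_prime + STD_NORMAL.inv_cdf(fpr))


# Hypothetical doctors: same curve, different thresholds (operating points).
doctor_fprs = [0.05, 0.10, 0.20, 0.40]
doctor_tprs = [roc_tpr(f) for f in doctor_fprs]

# Aggregated "human" point: simple average of the individual rates.
avg_fpr = mean(doctor_fprs)
avg_tpr = mean(doctor_tprs)

# What the shared curve itself achieves at the same average FPR.
curve_tpr_at_avg = roc_tpr(avg_fpr)
gap = curve_tpr_at_avg - avg_tpr

print(f"average doctor point: FPR={avg_fpr:.3f}, TPR={avg_tpr:.3f}")
print(f"curve at same FPR:    TPR={curve_tpr_at_avg:.3f}")
print(f"apparent gap:         {gap:.3f}")
```

Every simulated doctor is exactly as good as the curve, yet the averaged point falls below it. Making the thresholds more spread out widens the gap, and giving every doctor the same threshold removes it.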

This finding is vital for real-world applications, such as healthcare or finance, where AI is increasingly deployed alongside humans. Misinterpreting these comparisons could lead to undervaluing human expertise or implementing AI systems that don't account for nuanced decision-making. For regular readers, it underscores the importance of questioning headlines that claim AI 'outperforms' humans, as the reality may be more complex.

One limitation is that the analysis assumes humans and AI use similar information, which may not hold if doctors draw on insights that are never recorded. The study also focuses on statistical properties without fully exploring how cultural or contextual factors influence human decisions, leaving room for further research on when AI truly excels.

About the Author

Guilherme A.

Former dentist (MD) from Brazil, 41 years old, husband, and AI enthusiast. In 2020, he transitioned from a decade-long career in dentistry to pursue his passion for technology, entrepreneurship, and helping others grow.
